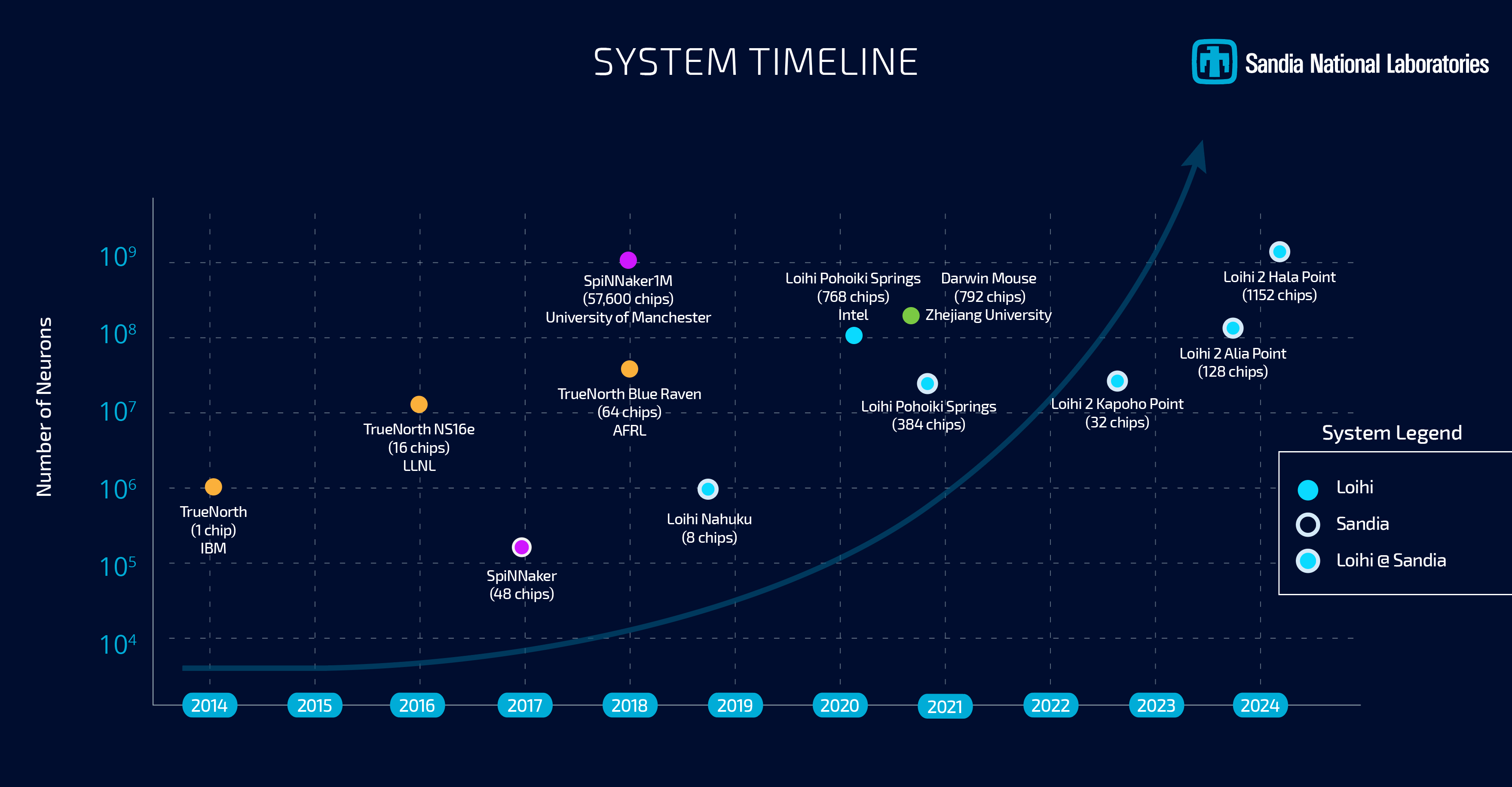

While neuromorphic computing remains a research topic for the time being, efforts in the field have continued to grow over the years, as have the capabilities of the specialty chips developed for this research. Along those lines, this morning Intel and Sandia National Laboratories are celebrating the deployment of the Hala Point neuromorphic system, which the two believe is the highest-capacity system in the world. With 1.15 billion neurons overall, Hala Point is the largest deployment yet of Intel's Loihi 2 neuromorphic chip, which was first announced at the tail-end of 2021.

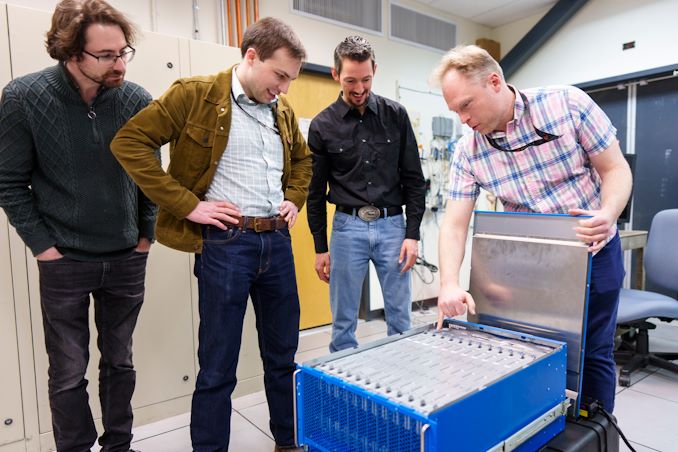

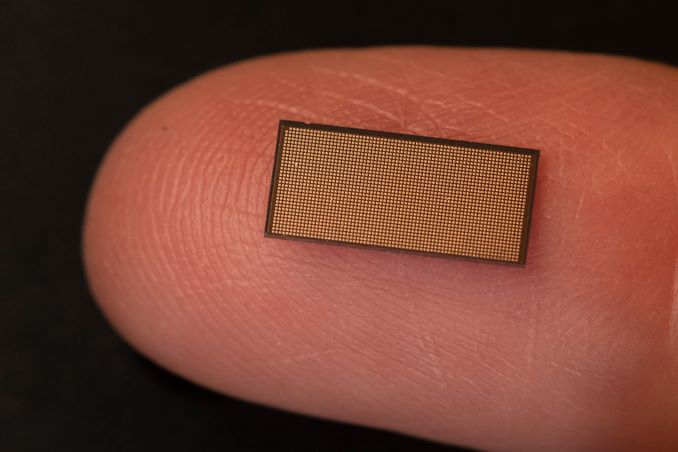

The Hala Point system incorporates 1152 Loihi 2 processors, each of which is capable of simulating a million neurons. As noted back at the time of Loihi 2's launch, these chips are actually rather small: just 31 mm² per chip with 2.3 billion transistors each, as they're built on the Intel 4 process (one of the only other Intel chips to do so, besides Meteor Lake). As a result, the complete system is similarly petite, taking up just 6 rack units of space (or as Sandia likes to compare it to, about the size of a microwave), with a power consumption of 2.6 kW. Now that it's online, Hala Point has dethroned the SpiNNaker system as the largest disclosed neuromorphic system, offering admittedly just a slightly larger number of neurons, but at less than 3% of the power of the 100 kW British system.
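As a quick sanity check on those figures, the arithmetic can be sketched in a few lines of Python. This uses only the numbers quoted above; the variable names are illustrative, and the per-chip neuron count is taken as a round one million, which is an approximation.

```python
# Figures quoted in the article; names are illustrative only.
CHIPS = 1152                   # Loihi 2 processors in Hala Point
NEURONS_PER_CHIP = 1_000_000   # assumed to be a round one million per chip
HALA_POINT_POWER_KW = 2.6      # quoted system power consumption
SPINNAKER_POWER_KW = 100.0     # quoted power of the British SpiNNaker system

# Total simulated neurons across the whole system.
total_neurons = CHIPS * NEURONS_PER_CHIP

# Hala Point's power draw as a fraction of SpiNNaker's.
power_ratio = HALA_POINT_POWER_KW / SPINNAKER_POWER_KW

# Rough density figure: simulated neurons per watt of system power.
neurons_per_watt = total_neurons / (HALA_POINT_POWER_KW * 1000)

print(f"Total neurons: {total_neurons / 1e9:.3f} billion")
print(f"Power vs. SpiNNaker: {power_ratio:.1%}")
print(f"Neurons per watt: {neurons_per_watt:,.0f}")
```

The total works out to 1.152 billion neurons, consistent with the 1.15 billion headline figure, and 2.6 kW is 2.6% of 100 kW, consistent with the "less than 3%" claim.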

A Single Loihi 2 Chip (31 mm²)

Hala Point will also be replacing an older Intel neuromorphic system at Sandia, Pohoiki Springs, which is based on Intel's first-generation Loihi chips. By comparison, Hala Point offers ten times as many neurons, and upwards of 12x the performance overall.

Both neuromorphic systems have been procured by Sandia in order to advance the national lab's research into neuromorphic computing, a computing paradigm that behaves like a brain. The central thought (if you'll excuse the pun) is that by mimicking the wetware writing this article, neuromorphic chips can be used to solve problems that conventional processors can't solve today, and that they can do so more efficiently as well.

Sandia, for its part, has said that it will be using the system to look at large-scale neuromorphic computing, with work operating on a scale well beyond Pohoiki Springs. With Hala Point offering a simulated neuron count very roughly on the level of complexity of an owl brain, the lab believes that a larger-scale system will finally enable it to properly exploit the properties of neuromorphic computing to solve real problems in fields such as device physics, computer architecture, computer science and informatics, moving well beyond the simple demonstrations initially achieved at a smaller scale.

One new focus from the lab, which in turn has caught Intel's attention, is the applicability of neuromorphic computing to AI inference. Because the neural networks behind the current wave of AI systems are themselves attempting to emulate the human brain, in a sense, there's an obvious degree of synergy with the brain-mimicking neuromorphic chips, even if the algorithms differ in some key respects. Still, with energy efficiency being one of the major benefits of neuromorphic computing, it has pushed Intel to look into the matter further, and even to build a second, Hala Point-sized system of its own.

According to Intel, in its research on Hala Point, the system has reached efficiencies as high as 15 TOPS-per-Watt at 8-bit precision, albeit while using 10:1 sparsity, making it more than competitive with current-generation commercial chips. As an added bonus to that efficiency, the neuromorphic systems don't require extensive data processing and batching in advance, which is normally necessary to make efficient use of the high-density ALU arrays in GPUs and GPU-like processors.

Perhaps the most interesting use case of all, however, is the potential to use neuromorphic computing to augment neural networks with additional data on the fly. The idea is to avoid re-training, which current LLMs require and which is extremely costly due to the extensive computing resources involved. In essence, this is taking another page from how brains operate, allowing for continuous learning and dataset augmentation.

But for the moment, at least, this remains a subject of academic study. Eventually, Intel and Sandia want systems like Hala Point to lead to the development of commercial systems, and presumably at even larger scales. But to get there, researchers at Sandia and elsewhere will first need to use the current crop of systems to better refine their algorithms, as well as to figure out how to map larger workloads to this style of computing in order to prove their utility at larger scales.