Bandwidth is a simple number that people think they understand. The bigger the number, the faster the storage.

Nope.

Leaving aside that many consumer bandwidth numbers are bogus (link speed is not storage speed), actual performance isn’t dependent on raw bandwidth.

Bandwidth is a convenient metric, easily measured, but not the key factor in storage performance. What most storage performance tools measure is bandwidth with large requests. Why? Because small requests don’t use much bandwidth.

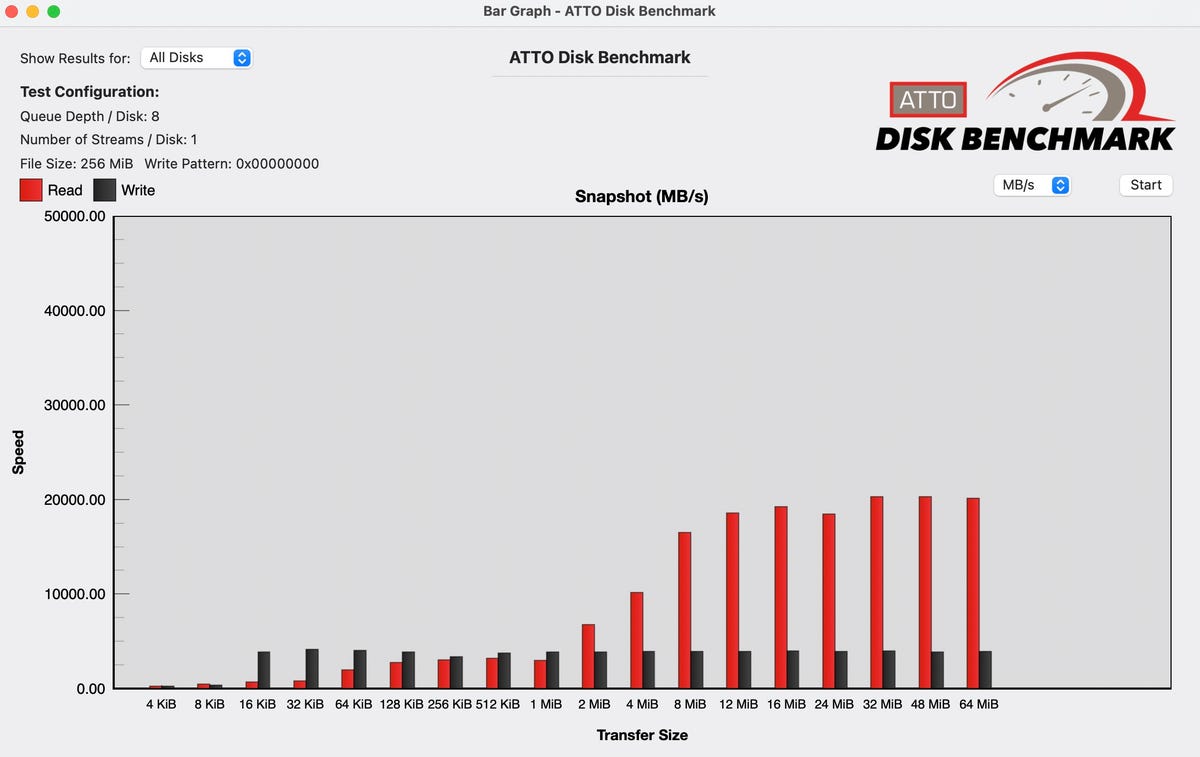

The following graphic, produced with a tool from ATTO, illustrates this. The X axis shows bandwidth and the Y axis shows access size. The correlation is obvious: small requests don’t use much bandwidth.

Drive benchmark on a fast internal PCIe SSD.

Robin Harris
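To see why the curve looks this way, here is a minimal Python model. It assumes (my simplification, not how ATTO’s tool works) that requests are issued one at a time and that each pays a fixed per-I/O cost before moving its payload at the link’s raw bandwidth. The 3 GB/s link and 20 µs overhead are hypothetical numbers chosen for illustration.

```python
def effective_throughput(request_bytes: int,
                         per_io_overhead_s: float,
                         link_bytes_per_s: float) -> float:
    """Bytes/sec achieved when requests are issued one at a time.

    Simplified model (an assumption): every request pays a fixed overhead,
    then transfers its payload at the link's raw bandwidth.
    """
    time_per_request = per_io_overhead_s + request_bytes / link_bytes_per_s
    return request_bytes / time_per_request


LINK_BYTES_PER_S = 3e9      # hypothetical 3 GB/s link
PER_IO_OVERHEAD_S = 20e-6   # hypothetical 20 µs fixed cost per request

# Sweep request sizes the way a drive benchmark does.
for size_kb in (4, 16, 64, 256, 1024, 8192):
    size = size_kb * 1024
    mb_per_s = effective_throughput(size, PER_IO_OVERHEAD_S, LINK_BYTES_PER_S) / 1e6
    print(f"{size_kb:>5} KB requests: {mb_per_s:8.1f} MB/s")
```

With these made-up parameters, 4 KB requests get under 200 MB/s while 8 MB requests come within a few percent of the full 3 GB/s. The fixed per-request cost, not the link, dominates small transfers.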

But why doesn’t the CPU issue more I/O requests to soak up that unused bandwidth? Because every I/O takes time and resources (context switches, memory management and metadata updates, and more) to complete.

There are lots of small requests even if you’re editing huge video files. That’s because behind the scenes, the CPU’s Memory Management Unit (MMU) is constantly swapping out least-used pages and swapping in whatever data or program segments your workload requires.

These pages are fixed in size: 4KB for Windows and 16KB for recent versions of macOS. If you have lots of physical memory there’s less paging right after a reboot, but over time, as you run more programs and open more tabs, physical memory fills up and the swapping begins.
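If you want to check the page size on your own machine, Python’s standard-library `mmap.PAGESIZE` constant reports it. The 1 GiB figure below is a hypothetical amount of memory being paged, and the request count assumes each page moves as its own I/O, which is a worst case; operating systems often cluster page-ins and page-outs into larger requests.

```python
import mmap

page_size = mmap.PAGESIZE  # OS memory page size in bytes on this machine
print(f"Page size on this system: {page_size // 1024} KB")

# Hypothetical worst case: how many page-sized requests it takes to page
# 1 GiB if every page were transferred as a separate I/O.
one_gib = 1 << 30
pages_per_gib = one_gib // page_size
print(f"Paging 1 GiB = {pages_per_gib:,} requests of {page_size:,} bytes")
```

With 4KB pages that works out to 262,144 requests; with 16KB pages, 65,536. Either way, a lot of small I/Os.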

Thus much of the I/O traffic to storage isn’t under your direct control. Nor does it require much bandwidth.

What’s really important?

Latency: how quickly a storage device services a request.

There’s an obvious reason for latency’s importance, and another subtle, but nearly as important, reason.

Let’s start with the obvious.

Say you had a storage device with infinite bandwidth but every access took 10 milliseconds. That device could handle 100 accesses per second (1000ms/10ms = 100). If the average access was 16KB, you’d have a total bandwidth of 1,600KB per second (about 12.8 Mbits/sec), far less than the nominal 480 Mbits/sec USB 2.0 offers, wasting an almost infinite amount of bandwidth.
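The arithmetic is easy to check. Here is a minimal Python sketch, assuming the device serves one access at a time (so IOPS is simply 1/latency) and using decimal units (1 KB = 1,000 bytes) to match the figures above:

```python
def latency_bound(latency_s: float, access_bytes: int) -> tuple[float, float]:
    """Return (IOPS, bytes/sec) for a device that completes one access at a time."""
    iops = 1.0 / latency_s
    return iops, iops * access_bytes


iops, bytes_per_s = latency_bound(0.010, 16_000)  # 10 ms latency, 16 KB accesses
usb2_bytes_per_s = 480e6 / 8                      # USB 2.0 nominal 480 Mbit/s

print(f"{iops:.0f} IOPS -> {bytes_per_s / 1000:,.0f} KB/s "
      f"({bytes_per_s * 8 / 1e6:.1f} Mbit/s), "
      f"{bytes_per_s / usb2_bytes_per_s:.1%} of USB 2.0's nominal rate")
```

It reports 100 IOPS and 1,600 KB/s (12.8 Mbit/s), under 3% of what USB 2.0 could nominally carry.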

A 10ms access is around what the average hard drive delivers, which is why storage vendors packaged up hundreds, even thousands, of HDDs to maximize accesses. But those were the bad old days.

Today’s high-performance SSDs have latencies well into the microsecond range, meaning they can handle as many I/Os as a million-dollar storage array did 15 years ago. Only if you had an endless stream of 16KB accesses would you be limited by the bandwidth of the connection.
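Where is the crossover? Here’s a rough Python sketch, again assuming one access in flight at a time: the device can deliver at most access size divided by latency, so the link only becomes the bottleneck once latency drops below access size divided by link bandwidth. The 50 µs SSD latency is a hypothetical figure; real SSDs also service many requests in parallel, which pushes the bottleneck toward the link even sooner.

```python
def limiting_factor(latency_s: float, access_bytes: int,
                    link_bytes_per_s: float) -> str:
    """Say whether latency or link bandwidth caps a one-at-a-time access stream."""
    device_bytes_per_s = access_bytes / latency_s
    return "latency" if device_bytes_per_s < link_bytes_per_s else "link"


def crossover_latency(access_bytes: int, link_bytes_per_s: float) -> float:
    """Latency below which the link, not the device, becomes the bottleneck."""
    return access_bytes / link_bytes_per_s


USB2 = 480e6 / 8    # USB 2.0 nominal rate in bytes/sec
ACCESS = 16 * 1024  # 16KB accesses

print(f"Crossover on USB 2.0: {crossover_latency(ACCESS, USB2) * 1e6:.0f} µs")
print("10 ms hard drive:", limiting_factor(10e-3, ACCESS, USB2))
print("50 µs SSD (hypothetical):", limiting_factor(50e-6, ACCESS, USB2))
```

For 16KB accesses over USB 2.0 the crossover is roughly 273 µs: the hard drive is latency-bound, while the hypothetical SSD would saturate the link.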

The subtle reason for latency’s importance is more complicated. Let’s say you have 100 storage devices with a 10ms access time, and your CPU is issuing 10,000 I/Os per second (IOPS).

Your 100 storage devices can handle 10,000 IOPS, so no problem, right? Wrong. Since each I/O takes 10ms, your CPU is juggling 100 uncompleted I/Os (10,000 IOPS × 0.01 seconds). Drop the latency to 1ms and the CPU has only 10 uncompleted I/Os.
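This is Little’s law from queueing theory: the average number of requests in flight equals the arrival rate times the time each request spends in the system. A minimal Python sketch reproducing the two cases above:

```python
def outstanding_ios(iops: float, latency_s: float) -> float:
    """Little's law: average I/Os in flight = arrival rate x time per I/O."""
    return iops * latency_s


# Same 10,000 IOPS workload at the two latencies discussed above.
for latency_ms in (10, 1):
    in_flight = outstanding_ios(10_000, latency_ms / 1000)
    print(f"{latency_ms:>2} ms latency at 10,000 IOPS: {in_flight:.0f} I/Os in flight")
```

Cutting latency by 10x cuts the backlog the CPU has to track by 10x, at the same IOPS.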

If there’s an I/O burst, the number of uncompleted I/Os can cause the page map to exceed available on-board memory and force it to start paging. Which, since paging is already slow, is a Bad Thing.

The Take

The problem with latency as a performance metric is twofold: it isn’t easy to measure, and few understand its importance. But people have been buying and using lower-latency interfaces for decades, probably without understanding why they were better than cheaper, and nominally as fast, interfaces.

For example, FireWire’s advantage over USB 2, even though the bandwidth numbers were roughly comparable, was latency. USB 2 (480 Mbits/sec) used a polling access protocol with higher latency. A FireWire drive would always seem snappier than the same drive over USB, thanks to the lower-latency protocol. You could boot a Mac off a USB 2 drive, but running apps was dead slow.

Similarly, Thunderbolt has always been optimized for latency, which is one reason it costs more.

Comments welcome. This is prep for another piece where I look at USB 3.0 and Thunderbolt drives. Stay tuned.